Seedance 2.0 is Overhyped

The generative AI video model caused quite a stir after a synthetic video of Brad Pitt fighting Tom Cruise went viral. If you take a closer look, however, it’s not groundbreaking.

Gen AI is trained on available data, including the likeness of major celebrities, brands, and existing IP. It makes sense that a competitive model would be able to replicate such well-known actors. In fact, it should be able to.

As a video verification journalist focused on debunking synthetic content, I can report that even with all the noise around Seedance, it has fidelity issues similar to those of its competitors, Sora 2 (RIP) and Veo 3.1.

I dive into the reality of these models in the latest edition of Storyful’s Verified on Substack (and below). ⬇️🤖

The landscape:

Ambitious companies are jockeying for position as we enter a new era of content creation with a focus on generative AI. These companies market their models as artistic outlets, places for users to fulfill creative endeavors from the cinematic to the ridiculous. However, not all users employ these models in a purely artistic manner. Bad actors will use these tools. They already are.

At Storyful, we’ve seen a measurable uptick in AI user-generated content circulating on social platforms, a lot of it timed to breaking news events or viral moments. In particular, we have seen a 60 percent increase in video verification requests since the start of the US-Israel war with Iran.

There is good news, however. The three leading AI video models — OpenAI’s (soon to be defunct) Sora 2, Google’s Veo 3.1, and ByteDance’s Seedance 2.0 — while more capable than their predecessors, share some fundamental weaknesses. Understanding those weaknesses is still your best line of defense.

The promise:

Over the past three years, generative AI video has moved quickly, from the early, obviously broken visuals to footage that is more controlled, more physics-aware, and harder to dismiss at a glance. OpenAI, Google, and ByteDance have each released updated models within a six-month window, each claiming their iteration is closer to replicating the real world than the last.

A closer look tells a different story.

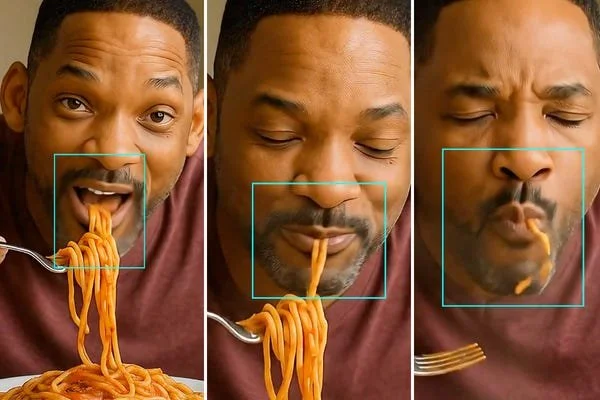

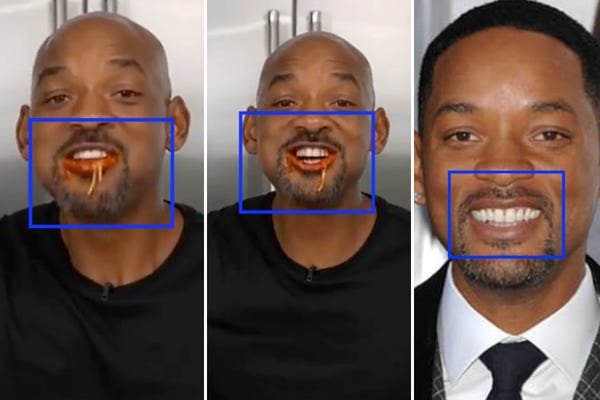

Using the Will Smith spaghetti clip as a consistent benchmark (it has become a widely accepted test for measuring AI video progress), here’s where each model stands:

The reality:

Sora 2: Video here

OpenAI recently announced it was scrapping Sora; however, the model is still worth mentioning in this context. This clip was the weakest of the bunch. The spaghetti is full of static, one of the telltale signs of a video generated by Sora. The audio does not match up with his facial movements, which are relatively realistic. A noodle suddenly breaks into several pieces, indicating issues with physics.

Veo 3.1: Video here

Obviously smooth features and cartoonish audio. The spaghetti magically morphs from four pieces to two, then drops back into the bowl. As the character slurps the spaghetti, a noodle breaks apart and disappears into his chin, indicating issues with physics and reality.

Seedance 2.0: Video here

Seedance 2.0 is the most convincing of the three, but it isn’t perfect.

A Seedance 2.0 video of Brad Pitt fighting Tom Cruise went viral days after the model was released, but the reaction was outsized compared to the model’s capabilities. Soon after, ByteDance received a cease-and-desist letter from Paramount, and Disney sent a takedown demand alleging intellectual property infringement.

Much more convincing than previous iterations, but there are still some problems related to physics and realism. A noodle falls from his fork onto the lip of the bowl and breaks apart (not how cooked noodles behave in the real world). Two noodles can be seen hanging from his mouth; they magically turn into a single piece that then somehow stays attached to his face as he talks. Check out his teeth: they are all the same height (not accurate to his real teeth) and show quite a bit of grain/static.

The good news:

These models are good, but not seamless. You have time to build up your defenses.

For video desks and audience teams, the practical implication is that the detection signals that flagged AI footage a year ago are less reliable now. The obvious tells like floating hands, flickering lights, and distorted faces are largely gone. What remains requires frame-by-frame scrutiny: looking for physics anomalies, text inconsistencies, and audio sync issues, and checking for the absence of any corroborating source or eyewitness account.

Not every new release deserves boisterous applause or resounding condemnation. What it deserves is a clear-eyed assessment of where the technology actually stands and a newsroom that’s prepared for where it’s going.

Sora 2, Veo 3.1, and Seedance 2.0 are each incrementally better than what came before. Each new iteration narrows the gap. However, at this point, none of them can reliably replicate the physical world under the kind of scrutiny a newsroom should apply.

P.S.

OpenAI terminates Sora

OpenAI abruptly announced plans to terminate its video generation model Sora on March 24. The decision ends a $1 billion investment deal between Disney and OpenAI. While Disney had not yet invested, Reuters reported that teams at both companies were working together as recently as March 23.